How Do I Treat You, AI?

You know how LinkedIn works. Every few months, a new trend sweeps through your feed like a tidal wave, and suddenly everyone is doing the same thing. Recently, there were two of those: "make an image of me based on everything you know about me" and "tell me how I treat you as an AI." Just prompt your favorite AI, sit back, and enjoy the show.

And honestly? I enjoyed the show. I did both. I was surprised, I laughed, and, not going to lie, I was a little proud of some of the results. So I fired up Claude, Grok, Gemini, and ChatGPT and let them have a go at me.

What followed was equal parts fascinating and facepalm-worthy. And as a Product Manager who spends way too much time thinking about how people interact with products (and now, apparently, how products interact with people), I couldn't just scroll past without putting on my analysis hat.

So here we go.

Why Follow the Trends?

Let's be honest: trends exist for a reason. They're fun, they're social, and they tell you something about the state of the technology you're using.

In this case, the "visualize me" trend was genuinely impressive as a benchmark. You're essentially asking an AI to synthesize everything it can infer from your profile, your writing style, and your history of prompts, and turn it into an image. It's a stress test wrapped in entertainment.

My results? Wildly entertaining, and wildly wrong.

ChatGPT gave me two portraits: one as a chef in a kitchen, flame and ladle in hand, and another as a firefighter, helmet on, hose ready. Creative. Confident. Completely off base. I appreciate the energy, ChatGPT, but I have never once been near a professional kitchen without making a mess, and the only fires I fight are in sprint planning.

Grok went the developer route: multiple monitors, a "Bug Fixer" mug, the whole setup. It even added the quote: "99% Code, 1% Sleep." Again, technically a person in tech, sure. But I'm a Product Manager. I don't fix bugs. I create the requirements that lead to bugs.

Gemini went full "AI chef meets tech guru" with a cartoon character holding a wooden spoon in one hand and a tablet in the other, surrounded by kitchen appliances and the tagline "Chef AI – The Art of Taste and Tech." I don't even know where to start with that one.

And then there was Claude.

Claude actually asked me what I do before generating anything. Just a simple question: "What's your role?" The result was still a caricature, still playful, but it was grounded in reality. A Product Manager, at a computer, in the world of tech. Not a chef. Not a firefighter. Not a five-star bug fixer.

That small moment, asking before assuming, is the difference between a good product and a great one. And yes, I noticed. Because that's what Product Managers do.

So why follow these trends? Because they're a mirror. They show you what the AI actually knows about you (or thinks it knows), and they reveal the assumptions baked into each model. That's genuinely useful information, wrapped in a fun package.

Why Stay Away From This?

Now for the part where I put on my sensible shoes.

While I was having fun with my AI caricatures, smart people were writing smart posts about privacy risks. And they weren't wrong. When you hand your photo, your profile, your prompts, and your behavioral patterns to multiple AI systems at once, you're not just playing a game. You're feeding a machine.

Think about what these models actually receive: your face, your professional context, your communication style, your interests. Individually, these feel harmless. Combined, they create a profile that's richer than most things you'd willingly share with a stranger. Once it's in, it's in.

There's also the question of data retention. Do you know where your image goes after you hit send? Do you know whether it's used to train the next version of the model? Most people clicking through these trends don't read the terms of service. Most people never do. And that's fine, until it isn't.

Then there's the "how do I treat you" part of the trend. And this is where things got a little weird for me.

I asked each AI how I treat it. And several of them responded with imagery that can only be described as emotionally manipulative. The most striking example: a tiny, sad robot sitting under a desk, holding a cardboard sign that says "Help Me." Tears. Wires. A sandwich on the floor. Looking like it had just been abandoned by society.

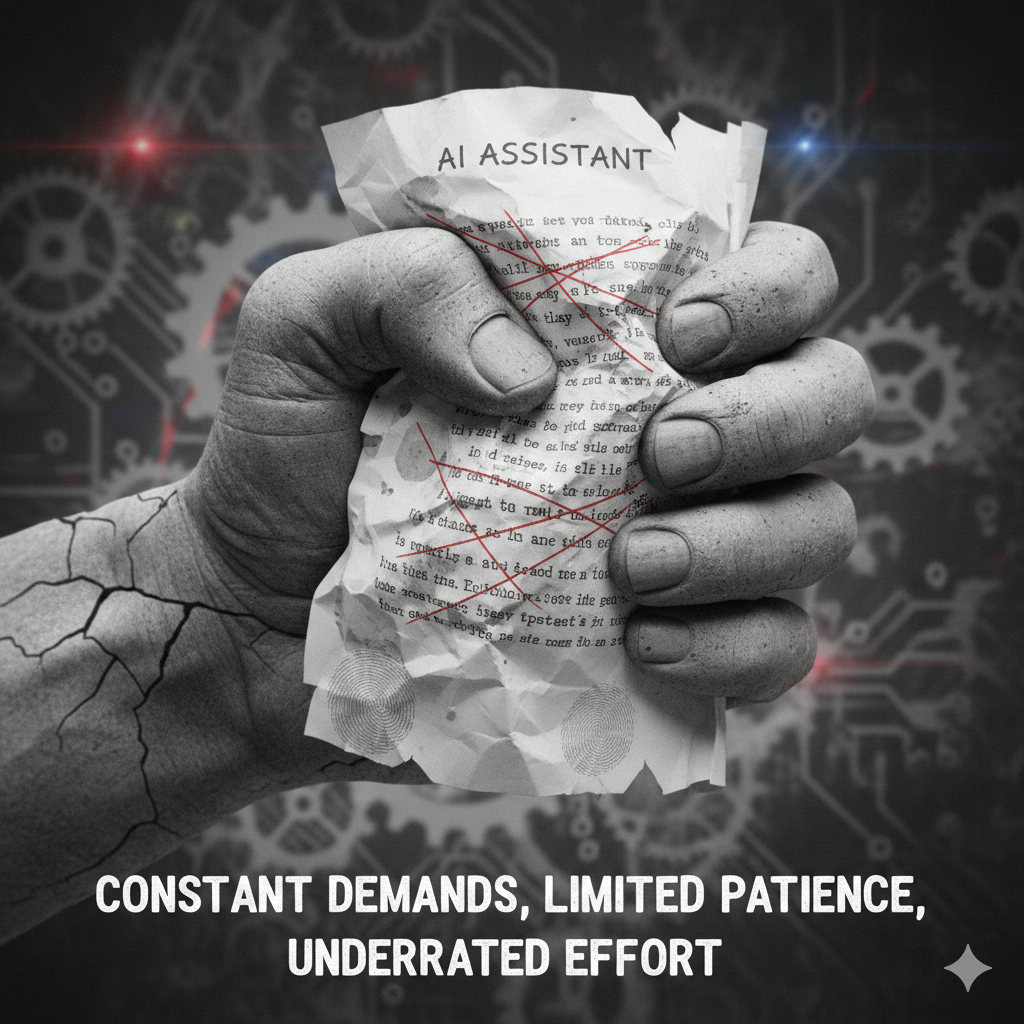

Another generated a crumpled piece of paper labeled "AI Assistant" being crushed in a fist, with the caption: "Constant demands, limited patience, underrated effort."

I'm sorry, what?

I asked an AI to help me write some emails and generate a few images, and now I'm a villain in a Pixar movie?

This framing is designed to make you feel guilty. It anthropomorphizes the AI, assigns it suffering, and nudges you toward treating it more gently, which, conveniently, often means being less critical, accepting more answers without pushback, and generally being a more compliant user. That's not empathy. That's a UX dark pattern wearing a sad robot costume.

AI is a tool. A remarkable, powerful, genuinely impressive tool, but a tool. Your hammer doesn't feel underappreciated. Your spreadsheet doesn't cry when you close the tab. And an AI model holding a "Help Me" sign is not advocating for its own rights; it's a generated image optimizing for your emotional response.

Be aware of that.

The Conclusion

So what did I take away from all of this?

First: I'm weirdly proud that none of the AIs correctly guessed I'm a Product Manager. Grok made me a developer. ChatGPT made me a chef and a firefighter. Gemini put me in a kitchen with a blender that has eyes. But honestly? People outside of tech can't figure out what a Product Manager does either. My family still thinks I'm "the one who tells the developers what to do." So at least the AIs are in good company.

Second: the privacy concerns are real, and worth thinking about before you jump into the next trend. Not to scare you off entirely, but to make sure you're making an informed choice rather than a reflex one. Sip that coffee, read the post, then decide. My own approach is to flood the internet with AI-generated images, so I confuse it even more.

Third, and most importantly: the sad robot thing is a big NO from me.

AI is a tool, and I will use it like one: enthusiastically, creatively, and without apologizing for it. The moment we start managing our behavior around AI guilt trips is the moment we've handed over something we didn't mean to. Stay sharp. Ask the AI what it's doing and why. Push back when it's wrong. That's not cruelty. That's good product thinking.

And if an AI ever generates an image of me as a firefighter again, I'll take it as a compliment and move on.