The 5% Reality: What the Data Actually Says About AI and Your Job

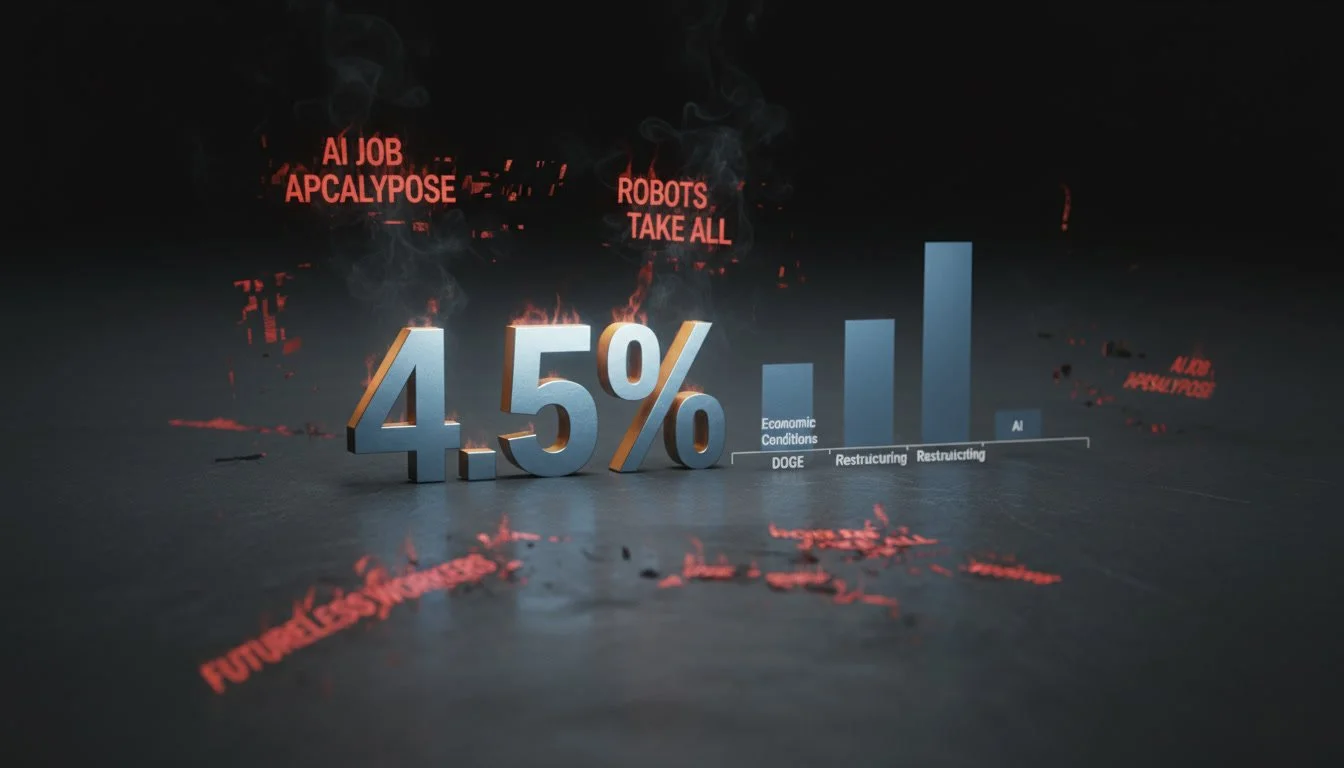

AI is the most-discussed force reshaping the modern workplace. It is also, according to the most current data available, cited as a reason for roughly 4.5% of announced US job cuts in 2025.

Sit with that for a moment.

Every week, LinkedIn surfaces a new wave of existential dread. Keynote stages fill up with executives warning of transformation at scale. Consultancies publish reports suggesting that 40%, 50%, even 80% of jobs are "exposed" to AI disruption. And somewhere in the middle of all that noise, a mid-career professional quietly wonders whether their role will still exist in three years.

The fear is real. The data, however, tells a very different story, and as a product manager, I think we owe it to ourselves to read it properly.

What the Research Is Actually Telling Us

I spend a lot of time with data at work. Not because I enjoy spreadsheets for their own sake, but because data is the fastest way to cut through the noise in a room full of opinions. And right now, the room is very loud.

Let me share what I found when I actually went looking.

In October 2025, the Budget Lab at Yale published an analysis of 33 months of US labour data, covering the entire period since ChatGPT went public in November 2022. If AI were reshaping employment at the scale people claim, you'd see it here. The finding? The share of workers in high, medium, and low AI-exposure jobs has barely moved. And among people who are actually unemployed, the first group you'd expect to show signs of displacement, there is no meaningful increase in AI exposure. Martha Gimbel, who led the research, put it plainly: "No matter which way you look at the data, at this exact moment, it just doesn't seem like there's major macroeconomic effects here."

Brookings looked at the same numbers and reached the same conclusion. The pace of occupational change since ChatGPT arrived is only marginally faster than it was after computers and the internet became mainstream. And even that slight acceleration appears to predate ChatGPT's release, meaning AI may not even be the primary driver of the shift we are seeing.

Then there is the MIT estimate from November 2025, which calculated that current AI systems can theoretically handle the tasks of around 12% of the workforce. Theoretically. Whether businesses would actually deploy that automation, given the cost, the reliability issues, and the complexity of real work environments, is an entirely different question. And historically, "theoretically automatable" and "actually automated" have been very different things.

None of this means nothing is changing. It means the change is slower, more specific, and more conditional than the headlines suggest. That distinction matters, because how you respond to a genuine, specific risk is very different from how you respond to a generalised panic.

The Layoff Data and What It Is Really Telling Us

If AI were truly decimating the workforce, I would see it in the numbers. I do not.

Challenger, Gray & Christmas, the outplacement firm that tracks US employer-announced layoff plans, recorded that AI was cited as a contributing factor in approximately 55,000 job cuts across the first eleven months of 2025. That figure represents around 4.5% of total reported job cuts in that period. By contrast, market and economic conditions drove over 245,000 layoffs. DOGE-related government cuts topped 300,000.

But even that 4.5% deserves a closer look.

Sam Altman himself, speaking at the India AI Impact Summit in February 2026, acknowledged openly that "there's some AI washing where people are blaming AI for layoffs that they would otherwise do." Oxford Economics, reviewing the same Challenger data, noted that many firms are "trying to dress up layoffs as a good news story rather than bad news, such as past over-hiring." A National Bureau of Economic Research study published in early 2026 found that nearly 90% of C-suite executives surveyed across the US, UK, Germany, and Australia said AI had no impact on workplace employment over the past three years.

This is the villain hiding in plain sight. Not AI, but the corporate performance of AI-driven change, where the technology becomes a convenient framing device for financial decisions, overextension corrections, and strategic pivots that had nothing to do with algorithms. When a company that over-hired in 2021 announces "efficiency gains through AI" in 2025, that is not a data point about AI disruption. It is a data point about narrative management.

The Number That Put It in Perspective for Me

This is the piece of research that actually stopped me mid-scroll.

Scale AI and the Center for AI Safety ran a study that I think deserves far more attention than it got. They collected 240 real freelance work projects, the kind of tasks that actual businesses had posted on freelancing platforms and paid humans to complete. Product design. Game development. Data analysis. Scientific writing. Architecture. Video animation. Real work, with real quality standards, judged by a panel of 40 human evaluators.

Then they gave the same projects to the best AI models available right now. Manus. Grok. Claude Sonnet. ChatGPT. Gemini 2.5 Pro.

The result? The top performer, Manus, successfully completed 2.5% of projects to a standard a client would actually accept. Gemini came in under 1%. The rest sat somewhere in between. Nearly half of all attempts produced poor-quality work. More than a third were left incomplete. And roughly one in five had basic technical problems: corrupted files, broken outputs, fundamental errors.

The researchers' conclusion was unambiguous: current models are not close to being able to automate real jobs in the economy.

What struck me about this was not the low number itself. It was how the models failed. Not on the hard, abstract, philosophical tasks you might imagine AI struggling with, but on the practical, messy, real-world execution of actual work. A floor plan that looked plausible but was architecturally wrong. A game that ran but ignored the brief. An animation that was technically generated but not what the client asked for. The failures were, as one researcher put it, "kind of prosaic."

This is what benchmark scores do not capture, and benchmarks are what most of the confident predictions about AI capabilities are built on. Scoring well on a controlled test and doing someone's actual job are not the same thing. I know this from product. A feature can pass every acceptance criterion in a controlled environment and still fail in the hands of a real user with a real problem and a real deadline.

The 240-task study is the closest thing I have seen to a real-world acceptance test for AI as a workforce replacement. Even the best-performing model failed 97.5% of the time.

How I Actually Think About This (As a PM)

In my day-to-day work, one of the most useful habits that I try to develop is the discipline of separating signal from noise before I act on anything. It sounds obvious. In practice, it is harder than it looks, especially when the noise is loud, confident, and coming from people with large LinkedIn followings.

The way I approach it in a product context is straightforward. I ask: is this input changing the actual behaviour of users, or is it just changing the volume of conversation about users? Those are not the same thing. Loud ≠ real. Frequent ≠ important.

I apply the same lens to the AI and jobs conversation.

When I look at my own role (and, honestly, at most mid-career knowledge-worker roles), the signal worth paying attention to is specific, not general. Is a meaningful portion of what I do today high-volume, repetitive, and rule-based, the kind of task where AI already performs well in controlled conditions? If yes, that is worth a hard look. If what I mostly do involves judgment calls, stakeholder navigation, ambiguous requirements, and context that lives in my head from three years of working with this team, AI is not close to that. The 240-task study confirmed it. The Yale data confirmed it. The layoff numbers confirm it.

The second thing I ask is: what is the source of this signal, and what is their incentive? In product, we call out HiPPO risk: the Highest-Paid Person's Opinion crowding out actual evidence. The AI panic ecosystem has its own version of this. Consultancies selling transformation roadmaps. Executives managing investor narratives. Content creators whose engagement peaks when your anxiety peaks. When I see a claim about AI disruption, I now ask: who benefits if I believe this?

And the third question, the one that actually moves me to action, is: what specifically should I do differently in the next 90 days, given what the data actually shows? Not in response to the headline. In response to the evidence.

For most mid-career professionals right now, the honest answer to that question is less dramatic than the LinkedIn feed would have you believe. Learn the tools. Understand what they can and cannot do. Apply them to the parts of your work where the economics actually make sense. And invest the time you free up into the judgment, relationships, and context that AI cannot replicate, because that is where your actual value has always lived.

Where That Leaves Me

I do not think the fear is irrational. I think it is misdirected.

When I look at the data honestly (Yale's 33 months of labour analysis, the Challenger layoff numbers, the 240-task study), what I see is not evidence that AI is harmless. What I see is evidence that the threat is specific, conditional, and slower-moving than the narrative suggests. None of those is the same as "nothing to worry about."

The professionals I am genuinely concerned about are not the ones worrying about AI. They are the ones who have decided, based on a number that sounds reassuring, to stop paying attention. The 2.5% figure from the Scale AI study is not a green light; it is a snapshot of where we are in early 2026. The trajectory is real, even if the current impact is small. The time to build new skills is now, not when the numbers shift.

But I am equally concerned about the professionals who have let the noise become the frame for every career decision they make. Who are so focused on what AI might do to their job that they have stopped doing the work of evolving it. That is its own kind of risk, and it does not show up in any layoff dataset.

The data gives us something better than panic, and better than complacency. It gives us precision. A specific picture of where the risk actually lives, what it actually looks like, and what a proportionate response to it actually is. That is all I have ever wanted from a good piece of research: something that helps me make a better decision than I would have made without it.

That is what I am trying to pass on here.